Shared Reality – Multi-modal Control and Manipulation of 3D Objects in Shared Space for Collaboration

The advent of telephony brought people together from afar to converse as if they were right next to each other. The Internet, a century later, allows people to remotely share documents and data in audio, visual and text forms, further promoting the growth of remote collaboration. Face-to-face collaboration, however, often involves handling, i.e., manipulating and controlling, objects, be it a pen, a device, or a machine. For example, a lecturer may circulate a device to let the audience or students feel and operate it for a better understanding of the subject. As is well known in education, this “hands-on” experience is a critical part of the knowledge transfer process. Therefore, one may view the enabling of remote object handling, control and manipulation as the last challenge in the evolution of telecommunications, from telephony to tele-collaboration.

Our research is motivated by this challenge. It aims to develop the theory and associated technologies that will support rapid growth of remote collaboration in two ways: by supporting joint control and manipulation of physically modeled objects in a shared space (thus “shared reality”), and by enabling natural interactions between human collaborators (often an expert and a novice) for effective transfer of information and knowledge, far beyond today’s simple sharing of documents and data. The research thrusts of this joint project between Rutgers University and the Georgia Institute of Technology are:

- Development of the theory and practice of object control in macro-kinematics and micro-kinematics in a simulated physical space;

- Development of an object-dependent data structure capable of supporting macro- and micro-kinematic control of objects by human users via motion-tracking and speech interfaces;

- Synthesis of a shared virtual environment that emulates the real world (the one the collaborators are in individually and remotely), with an accurate sense of visual reality;

- Real-time capturing, acquisition and registration of the real world environmental parameters with those of the shared reality environment; and

- Development and demonstration of a working system that supports deep interactions between an expert and a novice to achieve a sophisticated collaborative task objective that requires manipulation and handling of a range of real and virtual 3D objects.
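As a rough illustration of the second thrust, an object-dependent data structure might pair a coarse (macro-kinematic) update driven by motion tracking with a fine (micro-kinematic) update driven by a spoken command. The following is a minimal sketch under stated assumptions, not the project's design: the class `SharedObject`, its methods, the `DIRECTIONS` table, the command format, and the step sizes are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from spoken direction words to unit vectors (x, y, z).
# This vocabulary is an assumption for illustration only.
DIRECTIONS = {
    "left": (-1.0, 0.0, 0.0),
    "right": (1.0, 0.0, 0.0),
    "up": (0.0, 1.0, 0.0),
    "down": (0.0, -1.0, 0.0),
    "forward": (0.0, 0.0, 1.0),
    "back": (0.0, 0.0, -1.0),
}


@dataclass
class SharedObject:
    """Hypothetical shared-space object with coarse and fine position control."""
    name: str
    position: list = field(default_factory=lambda: [0.0, 0.0, 0.0])  # metres
    micro_step: float = 0.001  # object-dependent fine step size, metres (assumed)

    def apply_macro(self, tracked_delta):
        """Coarse move: add a displacement reported by a motion tracker."""
        for i in range(3):
            self.position[i] += tracked_delta[i]

    def apply_micro(self, command):
        """Fine move: parse a command such as 'left 2' (direction, step count)."""
        word, count = command.split()
        direction = DIRECTIONS[word]
        for i in range(3):
            self.position[i] += direction[i] * self.micro_step * int(count)


# Usage: a coarse hand motion followed by a precise spoken correction.
bolt = SharedObject("bolt", micro_step=0.0005)
bolt.apply_macro((0.10, 0.0, 0.0))  # tracker reports 10 cm along x
bolt.apply_micro("left 2")          # two fine steps of 0.5 mm back along x
```

Keeping the fine step size on the object itself reflects the "object-dependent" idea: a small fastener and a large panel would plausibly need different precision, although the actual parameters would depend on the system built.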

The goal is to provide an unprecedented system and interface capability that allows individuals to control, handle, assemble and disassemble real objects, via remote collaboration and reality augmentation, to complete a designated task. Such a task might otherwise be difficult to complete without the enhanced capability.

This research encompasses intellectual merit along several dimensions. First, it pushes the scientific boundary of virtual world synthesis beyond modeling and visualization to control and manipulation. It extends the use of existing information technologies such as motion tracking systems and haptic devices to a new level where unprecedented capabilities can be demonstrated for a broad range of applications. Second, this research will lead to knowledge and methods that allow real-time capturing and registration of the environment so as to adapt the corresponding virtual world, an essential capability in many real-world applications involving unpredicted and unforeseen situations. Third, it proposes a unique innovation to integrate multi-modal control and computer-aided micro-kinematics, which augments the precision in object manipulation beyond that of existing motion tracking devices, thereby providing the needed dexterity.

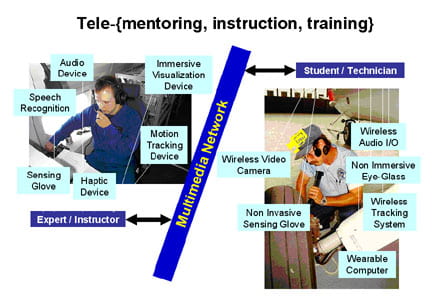

Potential applications of the results of this research are far-reaching. In education, the resulting technology will support new modes of instruction when physical equipment is involved, a situation quite common in vocational schools. In industry, it can be used for remote diagnostics and maintenance of expensive equipment and plants. In electronic commerce, it allows customers to try out consumer devices without having to purchase them or go to a specialized store to get a good ‘look-and-feel’ of a new piece of equipment. In military applications, the system will find extensive use in field repair instructions and training. And in medical rehabilitation, it allows a patient to receive prompt assistance instead of mere verbal instruction. The figure illustrates an example of collaboration over shared reality: an aircraft technician in the field inspects the equipment and attempts to repair any defects, while an expert engineer, connected via a multimedia network, provides expert knowledge and advice and demonstrates how to handle the dynamic situation as it arises. Note the various devices that these collaborators may wear.

The results of this research will have broad impact along a number of dimensions, most notably:

- Social collaboration with one or more associates over a distance (and possibly over time) without constraints;

- Mobility (or mobile working environment) without any compromise in productivity; and

- Organization of and access to a broad range of resources via natural human-human and human-machine communications.

It will redefine the concept of remote education and make management of educational resources much easier, since expensive real equipment (often limited in quantity) that was necessary in the past for actual object handling can be replaced by virtual equipment with little to no associated cost. The developed technologies will also enable many sophisticated remote work and maintenance tasks and thereby leverage a limited pool of expert resources.